Thank you for taking the time to read this and I hope you walk away with some insight into the challenges and questions being asked in the Technology world that dominates us all right now. If you enjoyed this post, please do share with your network.

'Fake News' is the new buzzword of the millennium and will have damaging consequences for generations to come. Misinformation can be really damaging if we aren't careful, so we have to take steps to prevent it.

As previously discussed in my podcast, the combination of conspiracy theories and Trump's constant spewing of misinformation culminated in the Capitol being raided by domestic terrorists (rioters, thugs, criminals, whatever you want to call them, but they are definitely not protesters). Misinformation, fake news, alternative truths or lies are bad (obviously). But why do people spread these lies in the first place?

Why do people spread lies in the first place?

There are generally 3 reasons that drive this:

Collective sense making

This is generally when something happens that leaves more questions than answers, so people search for answers. People work together and come to a conclusion - and it turns out that if you don’t have all the information in a given situation, you can come to some wild conclusions. Our brains really don’t like not knowing the full picture and will do anything they can to fill in the gaps, even if it’s to our detriment. You really can’t blame people for wanting answers - I think everyone at some point has bought into a collective sense making theory, however harmless or harmful it was.

Epistemic bubbles (or forming bubbles of like-minded people)

Humans are also very social creatures and have lived in communities and bubbles for as long as we have existed. An epistemic bubble is a social structure with inadequate coverage, where other voices are excluded by omission. If you find a group of people who think like you, you become comfortable in that circle and are less likely to venture out of it. I mean, why would you? You’re comfortable. It can also mean that if someone in the like-minded group says something, you are more likely to believe them. I mean, that’s kinda true with anyone. If someone whose opinion I’ve learnt to trust says that too much screen time can turn my eyes square, I would think ‘I trust this person as I normally agree with what they say, so there must be some sort of truth to this’. It’s easy to get sucked into a fake theory that is based on some loose truths, because a group that you relate to all agree with this fake theory.

Echo Chambers

This complements the previous point. When you join a group of people that think like you and share your values, you naturally enter an environment that basically reflects and reinforces what you believe. When something comes along that contradicts that, it can disturb the peace that exists in the group. People are more likely to band together and stamp this out to restore order and peace. This can stop constructive discussions and conversation. It can also lead to conspiracy theories being amplified.

Try going into the ‘Privacy’ subreddit and telling them that data collection is legitimate and needed for free services, and therefore, companies should have free rein over your information. It doesn’t matter if that is right or wrong, that community will eat you alive. You are actively going against what they believe and they might not engage with you in that conversation. Throw in a lie that reinforces their beliefs about the dodgy practices of the big boys, and you might get a different reception.

So why do people care about it now?

Conspiracy theories have always been around, but they weren’t front and center of anything. The 2016 US election was when misinformation started to be taken seriously in the West. Before then, conspiracy theorists were largely ignored because they didn't cause much harm to anyone but themselves (believing things like the world is flat generally doesn't harm those around you, only the individuals who hold the belief themselves). Or, if it was damaging, it didn't affect rich people. There was less of an incentive to tackle misinformation back then. But that has changed because the democracy of the rich is in jeopardy, so now action has to be taken. At the moment, the action is to label debunked theories and stories. The fundamental aim of these labels is to stop the spread of misinformation. But are they working?

No, labels do not work

I should preface this with the fact that there is no conclusive proof of whether the labels work or not, but that header made a catchy title. We need time to pass and people significantly more intelligent than me to conduct lots of experiments and write them up, only for me to take nuggets away and write a Substack post about it. However, early studies show that when social media giants started labelling and fact checking things, it made people believe more fake stories, just different ones. Labelling things created a binary situation.

What this system set up was that if a story had a label saying it was debunked, then it was a fake story. However, if it didn't have a label, then it wasn't exactly confirmed whether it was debunked or not. Yeah, not clear at all. Most normal people jumped to the conclusion that if a label was not applied to the story, then it was true. This meant that fake information was still being spread.

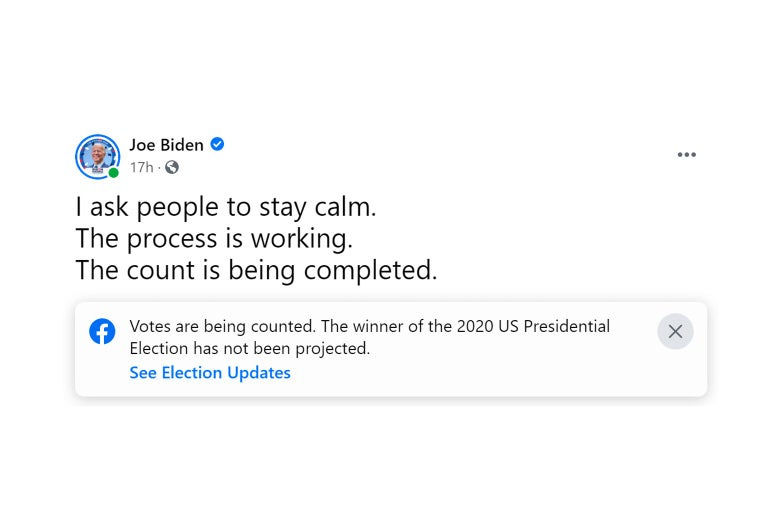

Other labelling techniques from platforms didn’t really help either. Facebook basically put very general labels on everything related to a specific topic. For example, during the election it didn't differentiate between posts that were lies and posts that weren't. So, if Trump said 'I won the election' and Biden said 'We will count all the votes', Facebook essentially put the same label on both, something along the lines of 'The vote hasn't exactly been confirmed yet'. This can be confusing to some, as it might not be clear what's happening.

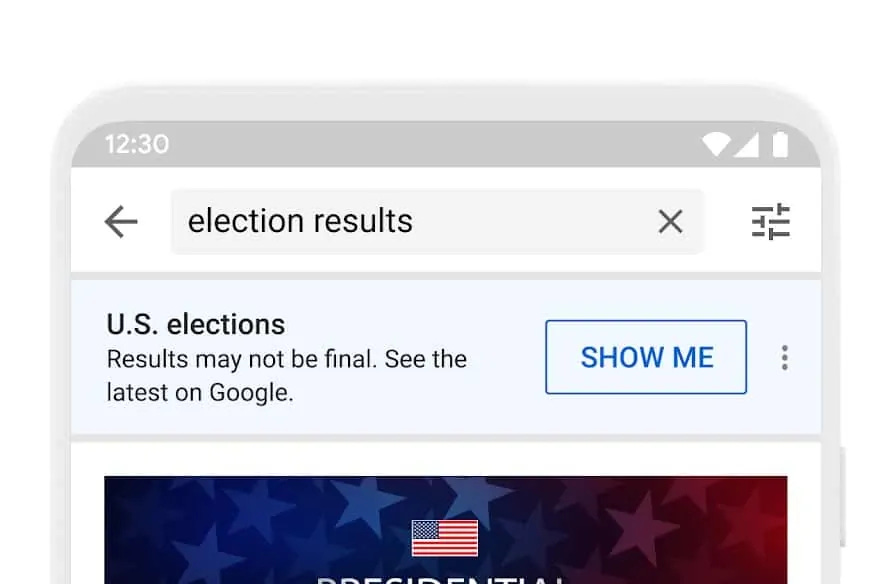

YouTube took a similar approach, putting up a vague label along the lines of 'Hey this election may or may not be still happening who knows click here to go to another website that is also owned by myself'.

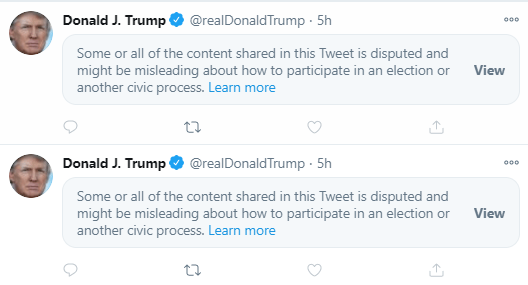

Twitter took the stance of potentially hiding the more outrageous tweets from Twitter users. Users had to actively click to see some of Trump's tweets, causing friction within the user interface.

Of the 3, Twitter’s method is the most likely to prevent misinformation spreading, as it causes friction between the users and the tweet. They're more likely to reconsider. Have you ever put a timer on a social media app on your phone? When you have used up the 30-minute limit you set yourself within the first part of your day and you go to open the app again, you get a notification saying you've run out of time. That prompt subconsciously makes you think about whether you should open the app again. Now you might ignore it, but you had to think about and acknowledge the decision you were making. That's enough to deter some people (more on this below).

So, labelling and fact checking don’t always work. On top of that, they are not particularly efficient and can even play into the hands of the misinformation spreaders. So what can you do?

What science says we should do to prevent fake news spreading

Education

Teach people what fake news is. Studies have shown that spending some time teaching the common tricks that bad actors use to spread misinformation is very effective. Not only do people start to recognise fake news, but the more people do it, the more obvious it becomes for the reader. From personal experience I can definitely agree with this. The biggest issue I find when someone expresses an interest in a conspiracy theory is that they are ridiculed, which naturally makes the individual retreat and not share this belief with others that disagree with them. I’ve found that, where possible, it is better to question the logic behind their theory, and eventually they do realise why the theory might be a bit wrong. Once you’ve pointed out the flaws, they’re super obvious.

Promoting fact-based news sources

By promoting fact-based news sources - or generally ‘trusted’ news sources - readers are exposed to better news sources, and therefore, better (or more fact-based) news. It can also prevent conspiracy theory bubbles from forming. Promoting ‘good’ news sources has been tried already, but apparently it isn’t working. I like this idea, but I think some caution is needed. The idea of ‘trusted’ news sources essentially depends on who classifies the news source. We can easily fall back into the trap of a select few individuals determining which news outlets get promoted, and then we are back in the cycle of people feeling like reporting is being censored because a diverse set of sources isn’t being promoted.

In addition, the current set-up of news sources might not be the best system, as it inherits any biases that exist in society right now. If there is a voice that feels incorrectly represented and they try to start an independent outlet to challenge mainstream ‘trusted’ sources, then they might have a tough time being seen.

Make it harder to spread the news

By making it harder, or adding friction, you’re adding in more hurdles and steps for the individual to share something. With minimal friction in sharing (something Twitter and Facebook even encouraged with the inherent design of their platforms) it’s easy to share an article that you find interesting, relate to or want to start a conversation about, even if you are not 100% sure of the accuracy of the information in the article. This is similar to the earlier point about Twitter hiding Trump’s tweets, which created friction. Adding in friction can make you think twice (but more on its effectiveness further down).

Emphasise the morality of the action of spreading fake news

I’ve commented on the topic of morality before. It’s not a clear-cut conversation, but the argument is that generally it can be considered unethical to share a bit of fake news. By instilling that ideology into society, people are less likely to want to share it. Constantly being exposed to fake news can cause people to become desensitised to it. By holding each other accountable, you can start to push people to do the right thing (according to your standards).

So what next?

Personally, I’m doubtful of promoting ‘fact-based’ news sources and pushing the moral argument. If people really don’t care, they will still do what they want. Humans are inherently selfish, with the differentiating factor being whether their selfishness harms others or not. You can be selfish and cut in line, or you can let someone go in front of you. By letting someone go in front of you, you are being nice, and you feel good because you did the good thing. Either way there is a net positive for you: you feel good (letting someone go in front) or you get what you want (getting closer to the front of the queue without waiting). You do both actions for yourself.

Asking someone to not share a post because it might be fake won’t work because, if the post aligns with their ideology, they won’t care. People can shun the conspiracy believers and make them feel bad, but all that does is push those people into a circle where their thoughts and ideologies are aligned and unopposed (allowing those thoughts to fester). Next thing you know they’re taking down 5G towers. I don’t like the idea of news corporations branding themselves as ‘fact-based’ and asking to be promoted further - you end up where you started, with one person controlling the majority of the news.

There are two main actions that need to be taken - preventative and reactive. The preventative action is education: education in health, science and the deception tactics used by misinformation spreaders. According to Nathan Walters, PhD:

The lack of understanding creates a vacuum that seemingly anybody with a phone camera and internet connection can fill. This leaves the public questioning who they can trust and what qualifies someone as an expert.

There need to be nationwide campaigns to train adults on the tropes of misinformation, and the same material needs to be incorporated into school courses for children. These education resources need to be accessible and available in as many languages as possible. Not everyone has the privilege to go to school, or if they do, they might not have been lucky enough to live in a stable environment that allowed them to learn. These people are also the most vulnerable.

The next step, reactive, will take many forms and will require many organisations working together. Governments will need to take steps to restrict fake news (which does have support in the US; I tried to find the sentiment in other countries but couldn’t find any studies, so please do let me know if you do), and platforms like Twitter and Facebook will need to add friction to make us think before we share (like forcing us to quote retweet, which they reversed, or prompting people to read an article before sharing).

Twitter has recently launched Birdwatch, a crowd-sourced initiative to educate Twitter users on fake news, which I have talked about briefly in my podcast. There will be a small group of people in the Birdwatch program who can label and add context around tweets, which can then be upvoted or downvoted by other Birdwatch members. This way multiple people can contribute context from many angles, and the best contribution will be the top voted one. Crowd activity relies on humans on average wanting to do (and actually doing) the right thing - and this works for Reddit with its moderation and for many other social media sites, so it’s worth a shot. Birdwatch is in its early days: as of writing only 1,000 people are in the scheme, and it’s solely based in the US. No one knows if it will work, and people have started to point out flaws in the program. But that’s expected. This is the start of tackling the next challenge of the decade, and I haven’t even touched on the problem of deepfakes, or any of the other ways misinformation will find its way to us.

Twitter Vice President of Product Keith Coleman wrote:

We know this might be messy and have problems at times, but we believe this is a model worth trying

I agree. This is a tough problem. It will be tough and messy, and some attempts will really fail spectacularly, but that doesn't mean we should stop trying.

We've seen what can happen if we don't try enough.

If you have a better idea than I do, if I’ve missed anything out or you think I am talking absolute rubbish, regardless of whether it’s positive or negative feedback, feel free to reach out either by commenting on the post or by emailing me at tanvirtalks@substack.com

If you enjoyed this post, subscribe to Tanvir Talks, where I publish a podcast twice a month and a newsletter once a month breaking down the big questions asked in tech into digestible chunks for you, the average consumer, to consume.