Ethics in AI: Why isn't Google innovating on Search?

The problems with ChatGPT

If you’ve been anywhere near Twitter or another social media platform in the last few weeks, you’ll be aware of the new tool that was released into the wild with no fanfare: ChatGPT. You can ask ChatGPT a question and it will respond as though you are having a conversation. It’s currently in beta so that the model can be refined before some business model is implemented (I would assume, anyway, as this stuff is expensive - with one estimate hitting up to $3 million a month).

ChatGPT is made by a company called ‘OpenAI’ who have the following mission statement on their about page:

OpenAI’s mission is to ensure that artificial general intelligence (AGI)—by which we mean highly autonomous systems that outperform humans at most economically valuable work—benefits all of humanity.

We will attempt to directly build safe and beneficial AGI, but will also consider our mission fulfilled if our work aids others to achieve this outcome.

They have had two big hitters in the consumer market recently: ChatGPT, which generates text in conversational language given a prompt (which we will explore in this post), and DALL E, an AI model that generates images from a prompt.

People instantly started using ChatGPT as a search engine - asking it things instead of Google, and getting satisfying results without having to go through links. The popular question on my social feeds was ‘Why isn’t Google doing anything about this?’.

Since the release of ChatGPT, it has been incorporated into a challenger search engine, You.com. So what is Google doing? Why hasn’t it incorporated this stuff already?

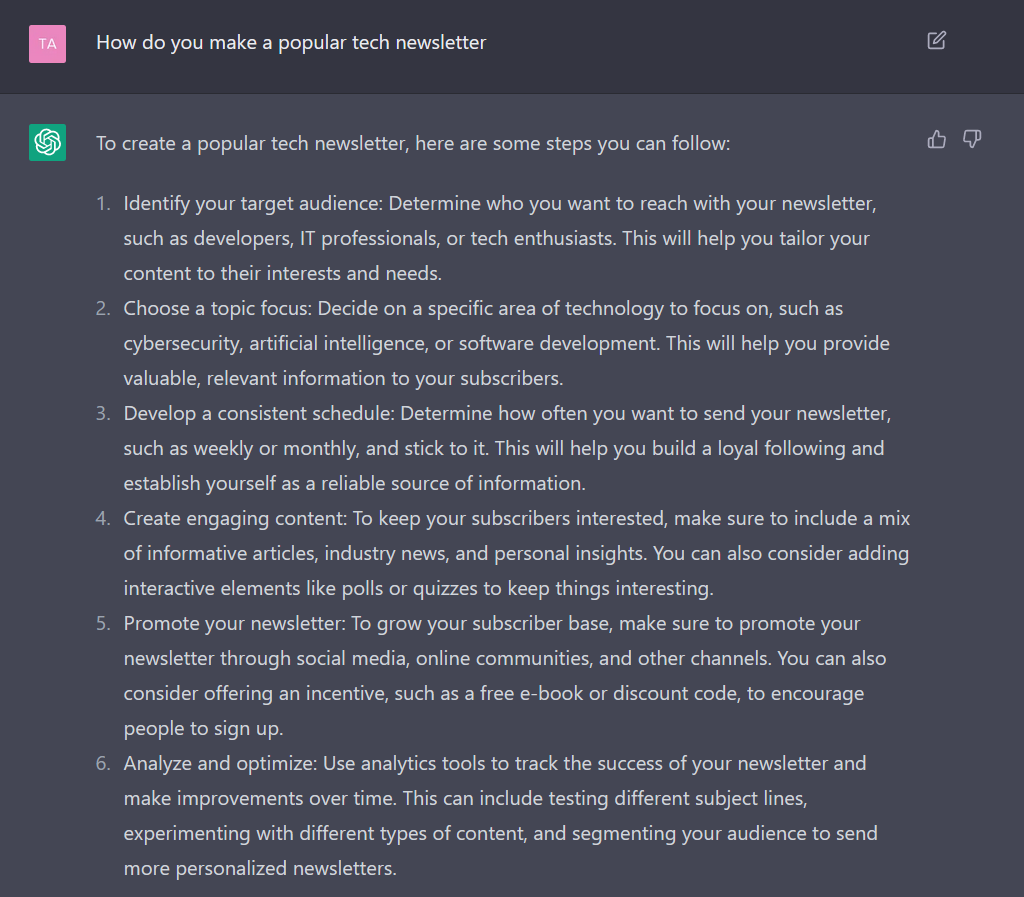

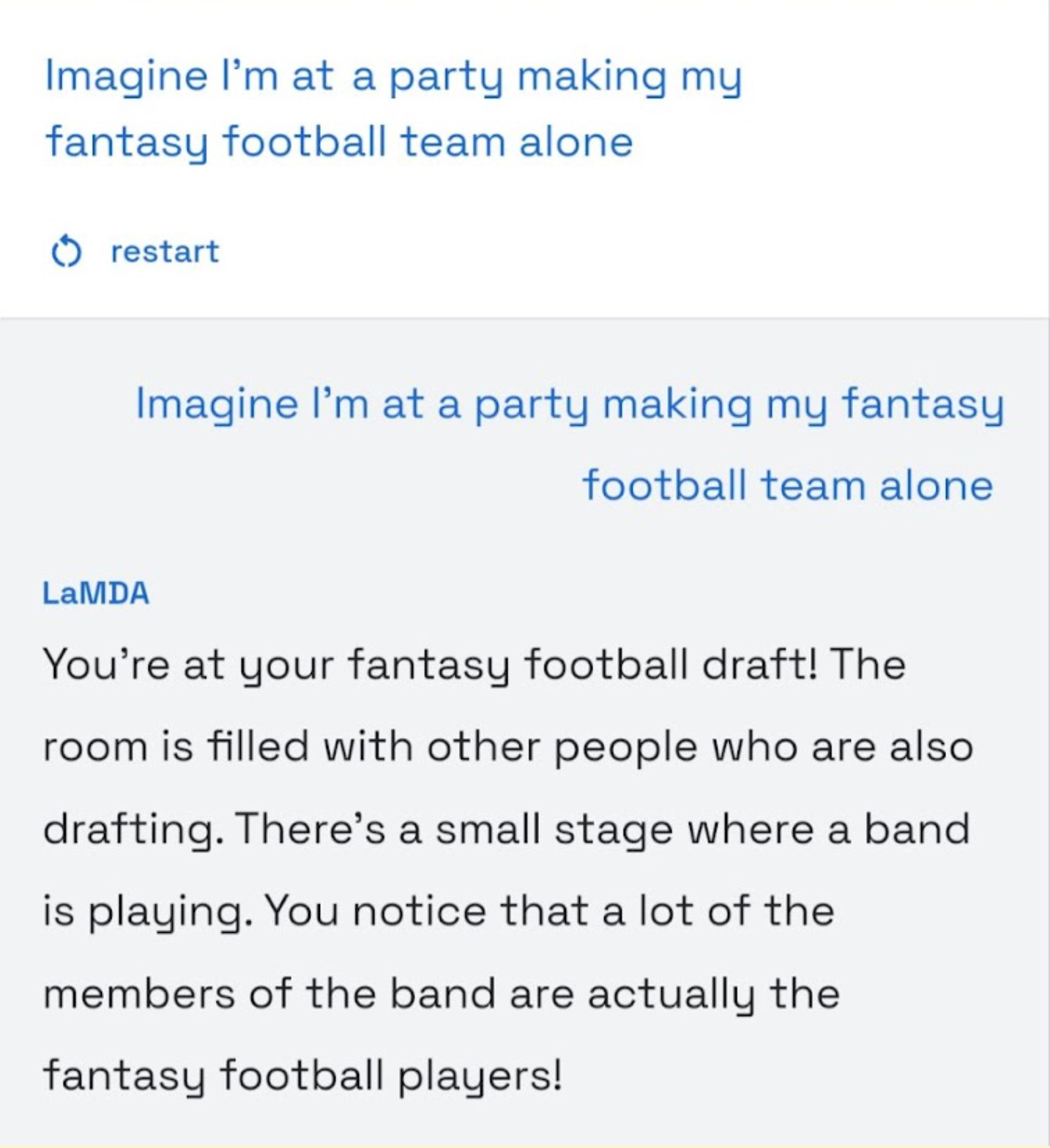

Language models can’t create content or think for you

When working with these language models (known as Large Language Models, or LLMs) it’s easy to forget that they aren’t ‘alive’ - even AI researchers can forget sometimes. Google has its own LLM, LaMDA (you can sign up to test it if you want). It has the same base as ChatGPT, but it’s aimed at producing conversational dialogue rather than the kind of text ChatGPT outputs. I gave both models the same prompt, and the difference shows: ChatGPT gives prose, whereas LaMDA gives a conversation.

The main thing to note, though, is that this is all these models are - language models. ChatGPT doesn’t search the internet, nor does LaMDA. They have been trained on text from the open internet (for ChatGPT, up to 2021) to learn how people talk and write prose. Given a prompt, the model uses the statistics of that text to pick the word most likely to come next, based on what people have written in the past, and repeats this one word at a time.
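
To make the ‘statistics’ idea concrete, here is a toy sketch in Python. It is not how ChatGPT or LaMDA are actually built (they are large neural networks that look at far more context than a single word), and the training sentence and function names below are made up for illustration. It simply counts which word followed which in a tiny piece of text and then always picks the most common follower.

```python
from collections import Counter, defaultdict

# Made-up training text, standing in for "everything people have written".
TRAINING_TEXT = "the cat sat on the mat and the cat sat on the rug"


def train_bigram_model(text):
    """Count, for each word, which words followed it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts


def predict_next_word(follow_counts, word):
    """Return the word that most often followed `word`, or None if unseen."""
    followers = follow_counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(follow_counts, prompt, max_words=4):
    """Extend the prompt one word at a time using the most likely next word."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_words):
        next_word = predict_next_word(follow_counts, words[-1])
        if next_word is None:
            break  # the model has never seen anything follow this word
        words.append(next_word)
    return " ".join(words)


model = train_bigram_model(TRAINING_TEXT)
print(predict_next_word(model, "the"))  # "cat" followed "the" most often
print(generate(model, "the"))
```

Starting from ‘the’, this toy model writes ‘the cat sat on the’ and would happily keep going in circles. It has no idea what a cat or a mat is; it only knows which words tended to follow which in the text it was given.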

Neither model knows what it is talking about. All it can see is that, statistically, the words it outputs are the most likely combination of words given the prompt. But this isn’t the first time we have seen a model like this.

Google made a voice assistant tool similar to ChatGPT that people weren’t happy about

Back in 2018, at the annual Google I/O developer conference, Google Duplex was announced. It was a voice assistant that could talk very convincingly like a human thanks to its natural tone of voice and its use of filler words like ‘uh’ and ‘hmm’. The demonstration is great and I would recommend you watch the video. In the video they call up a restaurant and make a reservation.

But shortly after its release, many people raised concerns that this was ethically dubious and that humans should know when they are interacting with a bot. People didn’t like the idea that you could answer the phone and be talking to a Google Assistant. It made people feel uncomfortable, especially coming from a Big Tech company such as Google. Google made changes to Duplex based on the feedback, for example having it announce that people are interacting with a bot.

This is the challenge Google has. Due to its past data handling practices and the many lawsuits that followed (a quick Wikipedia search will tell you there are many), Google is under increasing scrutiny whenever it announces anything. It is the same for other Big Tech companies, such as Meta (formerly known as Facebook). When Meta announced its Portal video calling device, data privacy concerns were raised straight away.

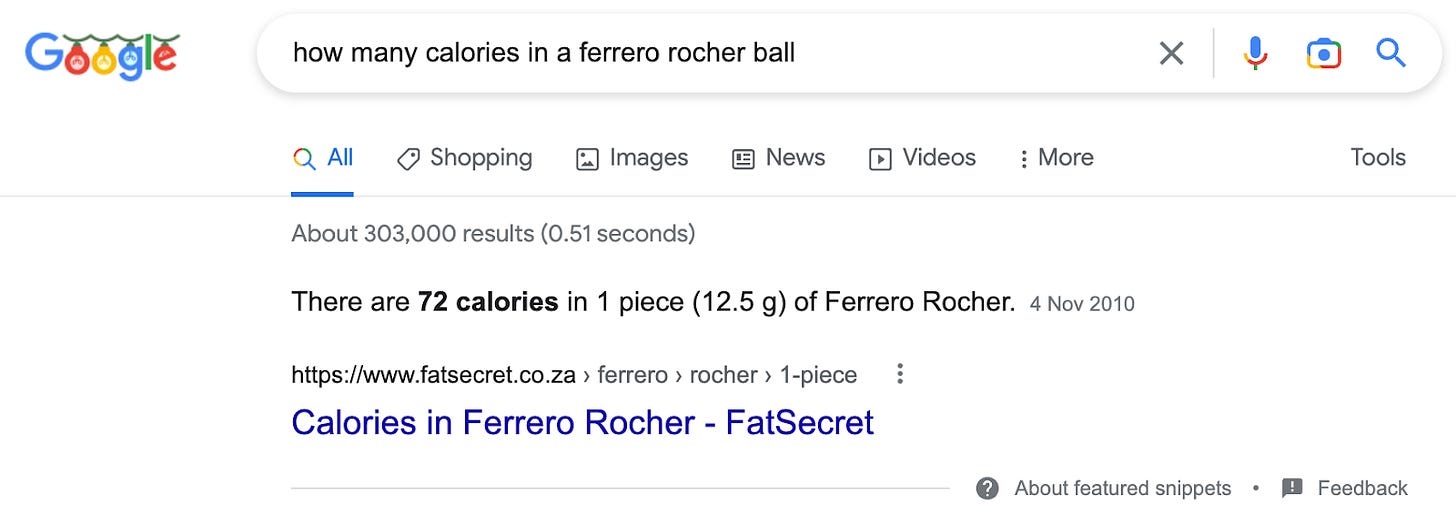

In addition to the scepticism towards new AI and machine learning projects coming out of Google, Google is in constant battles with content providers. Google Search’s primary objective was to give you links, but our use of the internet has evolved to us wanting the answer as soon as we search. This is why, when you search for something in Google now, you get an information snippet that answers your search using content it has gathered from other websites.

This normally ends the user’s search flow and the user carries on with their day. That harms the original website, which no longer gets the traffic it used to because the user doesn’t click through to it anymore, reducing its AdSense revenue.

This is a point of contention, and publishers do try to push back against it with varied success. In Australia, Google now has to pay news publishers if it wants to show their results (whether that money goes to journalists is another question). However, in March 2022, the music lyric provider Genius lost its case against Google, in which it claimed that Google scrapes the lyrics off its site and therefore diverts traffic away from Genius.

Ultimately, it’s better for the user experience if Google gives the answer straight away, but it’s damaging to publishers and content creators. If something isn’t agreed, the incentive to create and publish content will be gone, and Google won’t have anything to display.

Now, can you imagine if Google released a similar tool to ChatGPT and told the internet that they trained the model on everything people have written on the internet? And that anyone can copy and paste the output from this tool, and people won’t know whether an AI or a human wrote the text? Do you think they’d get away with it?

Ethics of ChatGPT

Because OpenAI does not have the same tainted reputation as Google, I’d argue that they have escaped a large amount of scrutiny over what they are doing to train their models.

I’ve written about ethics in AI before, about the environmental challenges of training AI and the way the data is collected. Essentially, data may be collected and used without the consent of the people the data was collected from. Both points apply to ChatGPT.

The model is still in its early days so I don’t expect this information to be available publicly, but some of the questions I would like answered about ChatGPT are:

What environmental controls have they put in? What considerations are they putting in place to reduce the carbon impact of their project?

What steps have they taken to remove bias from the internet data they have trained it on? Written information on the internet can have a multitude of biases, and some biases exist due to a lack of information (such as data from women/ethnic minorities not being collected as extensively). This Twitter thread would imply this hasn’t been considered yet.

How are they making decisions on what you can and can’t ask it? ChatGPT has taken some steps to prevent abuse, but in the early days you could literally ask it how to shoplift and make explosives. Can you imagine if Google released a tool like this? The biggest challenge will come when there is a topic with competing theories, like whether COVID was made in a lab or not (Spoiler: we don’t really know, but it is deemed unlikely). What will ChatGPT say then?

OpenAI’s other project, DALL E, also raises its own ethical concerns around its impact on the art industry, and I’d like to see the answers to the questions above for DALL E too.

I’m not against these AI projects. I actually think they’re really cool and can’t wait to see how they are utilised to make our lives easier, but we’ve sped ahead with innovation before, and it has hurt society. These models will require constant updating with content we create, so I don’t see ChatGPT becoming a search engine any time soon. Google is understandably taking a slower approach, as it can’t afford missteps like OpenAI can.

But one thing is for sure - these models just aggregate the brilliance of the human mind and without those creations, these models are just a snapshot of information in time. We have a long way to go until AI replaces us.

If you have a better idea than I do, if I’ve missed out anything or you think I am talking absolute rubbish, feel free to reach out either by commenting on the post, or by emailing me on tanvirtalks@substack.com